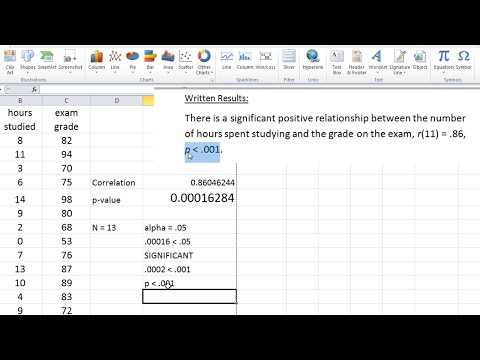

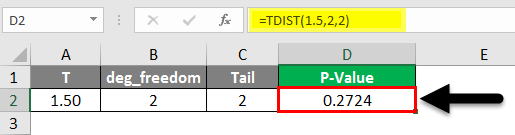

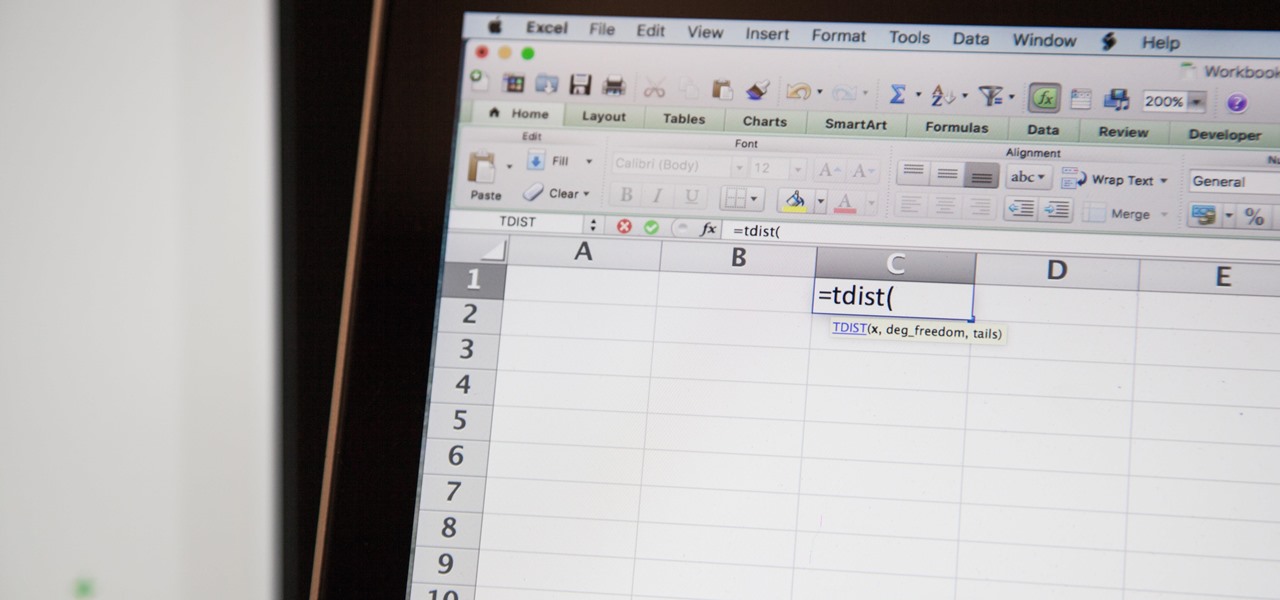

The p-value in Excel is a statistical measure that helps judge whether an observed relationship between two data groups reflects a real effect or could have arisen by chance alone. It plays a vital role in analyzing real-world issues in areas like medicine, economics, and human studies.

Statisticians and researchers commonly use the p-value when they want to analyze two data groups. They start by considering a null hypothesis, which assumes there is no relationship between the two data groups. This serves as their initial assumption for the experiment. Then they conduct a statistical test, compute the p-value, and interpret the result. For instance, if the p-value is less than the significance level (α) of 0.05 (5%), the observed data would be unlikely under the null hypothesis, so the null hypothesis is rejected and the two data groups appear to be related. Conversely, if the p-value is greater than 0.05 (5%), there is not enough evidence to reject the null hypothesis of no relationship.

Statisticians can use Excel to quickly and easily calculate the p-value. Although Microsoft does not provide a dedicated formula for the p-value in Excel, we can use functions like T.TEST and T.DIST for the calculation. There is also another method, the Analysis ToolPak, which essentially simplifies the T.TEST calculation for us.

In this section, we will see how to calculate the p-value in Excel using examples. Here are the three ways we will use: the T.TEST function, the T.DIST function, and the Analysis ToolPak.

Purpose: We can use the T.TEST function to calculate the p-value in Excel by passing the data ranges directly to the function.

range1: Cell range of the first data set.

range2: Cell range of the second data set.

Confidence intervals (CIs) are useful statistical calculations that convey the level of certainty around an estimated effect size. Whenever possible, I advocate including a CI when reporting an estimated effect size. Sometimes, however, it is of interest to back calculate a p-value from a confidence interval if the p-value is not reported in the manuscript. To do so, we need to remember the basic equations for the confidence interval and the calculation of a p-value. Assuming we are dealing with a 95% CI, we take the effect size and subtract/add 1.96 times the standard error of the effect size to get the lower and upper bounds of the confidence interval. For the p-value, we take the effect estimate and divide it by the standard error of the effect estimate to get a z score, from which we can calculate the p-value. Therefore, if we are given an effect size and confidence interval, all we need to do is back calculate the standard error and combine it with the effect size to get the z score used to calculate the p-value. Below are two examples to illustrate how to do this.

Suppose we have an estimate of a risk difference and a respective 95 percent confidence interval of 3.60 (0.70, 6.50).

(1) Subtract the lower limit from the upper limit to get the difference and divide by 2: (6.50-0.70)/2=2.9

(2) Divide the result by 1.96 (for a 95% CI) to get the standard error estimate: 2.9/1.96=1.48

(3) Divide the risk difference estimate by the standard error estimate to get a z score: 3.60/1.48=2.43

(4) Look up the z score using Python, R (ex: 2*pnorm(-abs(z))), Excel (ex: 2*(1-NORMSDIST(ABS(z)))), or an online calculator to get the p-value. Usually the two-sided p-value is reported: p=0.015 (two-sided)

For an odds ratio, things are a bit trickier because we first need to take the natural log of the estimate and the 95% confidence interval before we can back calculate the standard error used for the p-value. Suppose we have an odds ratio and 95 percent confidence interval of 1.28 (1.05, 1.57).

(1) Take the natural log of the estimate and both limits: ln(1.28)=0.25, ln(1.05)=0.05, ln(1.57)=0.45

(2) Subtract the lower limit from the upper limit and divide by 2: (0.45-0.05)/2=0.2

(3) Divide the result by 1.96 (for a 95% CI) to get the standard error estimate: 0.2/1.96=0.10

(4) Divide the log odds ratio by the standard error estimate to get a z score: 0.25/0.10=2.50

(5) Look up the z score using Python, R (ex: 2*pnorm(-abs(z))), Excel (ex: 2*(1-NORMSDIST(ABS(z)))), or an online calculator to get the p-value. Usually the two-sided p-value is reported: p=0.012 (two-sided). Note that this result is sensitive to rounding: carrying more decimal places through the steps gives a z score of about 2.41 and a p-value of about 0.016.

Hopefully these are good examples to get you started. As you can imagine, you can also go from a p-value to a 95% confidence interval by running these steps in the opposite direction, but in practice it is somewhat unlikely that an author would report an effect size and p-value while leaving out the 95% confidence interval.
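The back calculation described in the two examples above can be sketched in a few lines of Python. This is only a minimal illustration using the standard library: the function name `p_value_from_ci` and its `ratio` and `z_crit` parameters are hypothetical names chosen here, and the sketch assumes a symmetric, normal-approximation 95% CI (on the log scale for ratio measures such as odds ratios).

```python
import math
from statistics import NormalDist


def p_value_from_ci(estimate, lower, upper, ratio=False, z_crit=1.96):
    """Back calculate a z score and two-sided p-value from an estimate and its CI.

    ratio=True log-transforms the inputs first (e.g. for an odds ratio).
    z_crit=1.96 assumes a 95% CI built from a normal approximation.
    """
    if ratio:
        estimate, lower, upper = (math.log(v) for v in (estimate, lower, upper))
    # Half the CI width equals z_crit standard errors.
    se = (upper - lower) / (2 * z_crit)
    z = estimate / se
    # Two-sided p-value from the standard normal distribution.
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p


# Risk difference example: 3.60 (0.70, 6.50)
rd_z, rd_p = p_value_from_ci(3.60, 0.70, 6.50)
print(f"risk difference: z={rd_z:.2f}, p={rd_p:.3f}")

# Odds ratio example: 1.28 (1.05, 1.57), handled on the log scale
or_z, or_p = p_value_from_ci(1.28, 1.05, 1.57, ratio=True)
print(f"odds ratio: z={or_z:.2f}, p={or_p:.3f}")
```

Because the code does not round intermediate values, the odds ratio result comes out slightly different from a hand calculation that rounds the standard error to 0.10: roughly z=2.41 and p=0.016 rather than z=2.50 and p=0.012. The risk difference example is less sensitive to rounding and should agree with the hand calculation to the reported precision.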
